Chapter 4: Reflection


Reflection Pattern Overview


In the preceding chapters, we’ve explored fundamental agentic patterns: Chaining for sequential execution, Routing for dynamic path selection, and Parallelization for concurrent task execution. These patterns enable agents to perform complex tasks more efficiently and flexibly. However, even with sophisticated workflows, an agent’s initial output or plan might not be optimal, accurate, or complete. This is where the Reflection pattern comes into play.


The Reflection pattern involves an agent evaluating its own work, output, or internal state and using that evaluation to improve its performance or refine its response. It’s a form of self-correction or self-improvement, allowing the agent to iteratively refine its output or adjust its approach based on feedback, internal critique, or comparison against desired criteria. Reflection can occasionally be facilitated by a separate agent whose specific role is to analyze the output of an initial agent.


Unlike a simple sequential chain where output is passed directly to the next step, or routing which chooses a path, reflection introduces a feedback loop. The agent doesn’t just produce an output; it then examines that output (or the process that generated it), identifies potential issues or areas for improvement, and uses those insights to generate a better version or modify its future actions.


The process typically involves:


- Execution: The agent performs a task or generates an initial output.

- Evaluation/Critique: The agent (often using another LLM call or a set of rules) analyzes the result from the previous step. This evaluation might check for factual accuracy, coherence, style, completeness, adherence to instructions, or other relevant criteria.

- Reflection/Refinement: Based on the critique, the agent determines how to improve. This might involve generating a refined output, adjusting parameters for a subsequent step, or even modifying the overall plan.

- Iteration (Optional but common): The refined output or adjusted approach can then be executed, and the reflection process can repeat until a satisfactory result is achieved or a stopping condition is met.

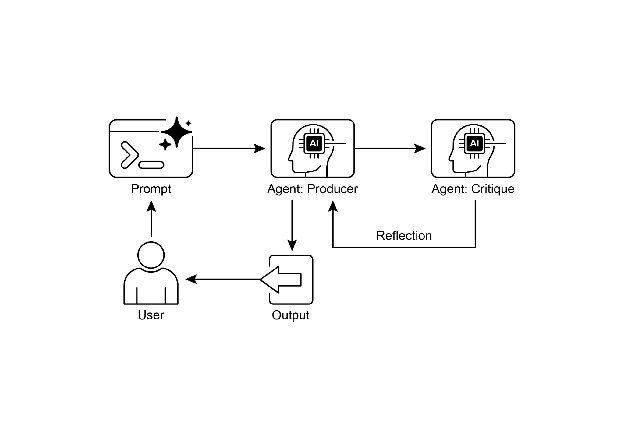
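The four steps above can be sketched as a small framework-free loop. This is a minimal illustration, not a reference implementation: the function name `reflect` and the toy `toy_generate`, `toy_critique`, and `toy_refine` callables are hypothetical stand-ins for LLM calls, chosen so the sketch runs offline.

```python
from typing import Callable, Optional, Tuple


def reflect(
    task: str,
    generate: Callable[[str], str],
    critique: Callable[[str, str], Optional[str]],
    refine: Callable[[str, str, str], str],
    max_iterations: int = 3,
) -> Tuple[str, int]:
    """Run execute -> critique -> refine until the critic is satisfied.

    `critique` returns None when it has no objections, otherwise feedback text.
    Returns the final output and the number of critique rounds that ran.
    """
    output = generate(task)                      # 1. Execution
    for round_number in range(1, max_iterations + 1):
        feedback = critique(task, output)        # 2. Evaluation/Critique
        if feedback is None:                     # Stopping condition met
            return output, round_number
        output = refine(task, output, feedback)  # 3. Reflection/Refinement
    return output, max_iterations                # 4. Iteration budget used up


# Toy stand-ins for LLM calls (hypothetical, for illustration only).
def toy_generate(task: str) -> str:
    return "reflection is a feedback loop"


def toy_critique(task: str, output: str) -> Optional[str]:
    if not output[0].isupper():
        return "Capitalize the first letter."
    if not output.endswith("."):
        return "End the sentence with a period."
    return None


def toy_refine(task: str, output: str, feedback: str) -> str:
    if "Capitalize" in feedback:
        return output[0].upper() + output[1:]
    return output + "."


final, rounds = reflect("Define reflection.", toy_generate, toy_critique, toy_refine)
print(final, rounds)
```

Passing the critic and refiner in as callables keeps the loop itself independent of whether the evaluation comes from another LLM call or from a set of rules.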

A key and highly effective implementation of the Reflection pattern separates the process into two distinct logical roles: a Producer and a Critic. This is often called the “Generator-Critic” or “Producer-Reviewer” model. While a single agent can perform self-reflection, using two specialized agents (or two separate LLM calls with distinct system prompts) often yields more robust and unbiased results.


1. The Producer Agent: This agent’s primary responsibility is to perform the initial execution of the task. It focuses entirely on generating the content, whether it’s writing code, drafting a blog post, or creating a plan. It takes the initial prompt and produces the first version of the output.


2. The Critic Agent: This agent’s sole purpose is to evaluate the output generated by the Producer. It is given a different set of instructions, often a distinct persona (e.g., “You are a senior software engineer,” “You are a meticulous fact-checker”). The Critic’s instructions guide it to analyze the Producer’s work against specific criteria, such as factual accuracy, code quality, stylistic requirements, or completeness. It is designed to find flaws, suggest improvements, and provide structured feedback.


This separation of concerns is powerful because it reduces the “cognitive bias” of an agent reviewing its own work. The Critic agent approaches the output with a fresh perspective, dedicated entirely to finding errors and areas for improvement. The feedback from the Critic is then passed back to the Producer agent, which uses it as a guide to generate a new, refined version of the output. The LangChain and ADK code examples later in this chapter both implement this two-role model: the LangChain example uses a specific “reflector_prompt” to create a critic persona, while the ADK example explicitly defines a producer and a reviewer agent.
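To make the two roles concrete, here is a minimal sketch in which the Producer and the Critic are the same model called with different system prompts. The `LLM` callable signature, the `Role` class, and `fake_llm` are assumptions made for illustration; they do not correspond to any particular library's API.

```python
from dataclasses import dataclass
from typing import Callable, Dict

# Hypothetical LLM interface: (system_prompt, user_prompt) -> reply text.
LLM = Callable[[str, str], str]


@dataclass
class Role:
    """A logical role: the same model behaves differently per system prompt."""
    name: str
    system_prompt: str

    def run(self, llm: LLM, user_prompt: str) -> str:
        return llm(self.system_prompt, user_prompt)


PRODUCER = Role("producer", "You write a first draft that satisfies the task.")
CRITIC = Role("critic", "You are a meticulous fact-checker. List flaws, or reply APPROVED.")


def produce_and_review(llm: LLM, task: str) -> Dict[str, str]:
    draft = PRODUCER.run(llm, task)
    # The critic sees only the task and the draft, not the producer's prompt.
    review = CRITIC.run(llm, f"Task:\n{task}\n\nDraft:\n{draft}")
    return {"draft": draft, "review": review}


def fake_llm(system_prompt: str, user_prompt: str) -> str:
    """Offline stand-in for a real model call (illustration only)."""
    if "fact-checker" in system_prompt:
        return "APPROVED" if "1889" in user_prompt else "- The completion year is missing."
    return "The Eiffel Tower was completed in 1889."


result = produce_and_review(fake_llm, "Write one sentence about the Eiffel Tower.")
print(result["review"])
```

Because the critic receives only the task and the draft, its judgment does not inherit the producer's instructions, which is the practical meaning of a "fresh perspective" here.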

Implementing reflection often requires structuring the agent’s workflow to include these feedback loops. This can be achieved through iterative loops in code, or by using frameworks that support state management and conditional transitions based on evaluation results. While a single step of evaluation and refinement can be implemented within a LangChain/LangGraph, ADK, or Crew.AI chain, true iterative reflection typically involves more complex orchestration.
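The idea of state management with conditional transitions can be illustrated without any framework. The sketch below mimics the shape of a graph-based workflow in plain Python; `NODES`, `EDGES`, `run_graph`, and the toy acceptance rule are hypothetical names invented for this example and are not the LangGraph or ADK API.

```python
from typing import Callable, Dict

State = Dict[str, object]
Node = Callable[[State], State]


def generate(state: State) -> State:
    revision = state["revision"] + 1
    return {**state, "draft": f"draft v{revision}", "revision": revision}


def evaluate(state: State) -> State:
    # Toy rule standing in for an LLM critic: accept from the second revision on.
    return {**state, "approved": state["revision"] >= 2}


def route_after_evaluate(state: State) -> str:
    """Conditional transition: finish, or loop back to the generator."""
    if state["approved"] or state["revision"] >= state["max_revisions"]:
        return "END"
    return "generate"


NODES: Dict[str, Node] = {"generate": generate, "evaluate": evaluate}
EDGES: Dict[str, Callable[[State], str]] = {
    "generate": lambda state: "evaluate",  # fixed transition
    "evaluate": route_after_evaluate,      # depends on the evaluation result
}


def run_graph(state: State, start: str = "generate") -> State:
    node = start
    while node != "END":
        state = NODES[node](state)   # run the node, producing a new state
        node = EDGES[node](state)    # choose the next node from that state
    return state


final_state = run_graph({"revision": 0, "max_revisions": 5})
print(final_state)
```

The `max_revisions` bound plays the role of the stopping condition, so the loop terminates even if the evaluator never approves.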

The Reflection pattern is crucial for building agents that can produce high-quality outputs, handle nuanced tasks, and exhibit a degree of self-awareness and adaptability. It moves agents beyond simply executing instructions towards a more sophisticated form of problem-solving and content generation.


The intersection of reflection with goal setting and monitoring (see Chapter 11) is worth noting. A goal provides the ultimate benchmark for the agent’s self-evaluation, while monitoring tracks its progress. In many practical cases, reflection acts as the corrective engine, using monitored feedback to analyze deviations and adjust the agent’s strategy. This synergy transforms the agent from a passive executor into a purposeful system that adaptively works to achieve its objectives.

Furthermore, the effectiveness of the Reflection pattern is significantly enhanced when the LLM keeps a memory of the conversation (see Chapter 8). This conversational history provides crucial context for the evaluation phase, allowing the agent to assess its output not just in isolation, but against the backdrop of previous interactions, user feedback, and evolving goals. It enables the agent to learn from past critiques and avoid repeating errors. Without memory, each reflection is a self-contained event; with memory, reflection becomes a cumulative process where each cycle builds upon the last, leading to more intelligent and context-aware refinement.

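One simple way to make reflection cumulative is to carry earlier critiques into later prompts. The following is a sketch under stated assumptions: the `CritiqueMemory` class and its prompt wording are hypothetical, and a real system might instead rely on the memory mechanisms discussed in Chapter 8.

```python
from typing import List


class CritiqueMemory:
    """Keeps critiques from earlier cycles so later prompts can reference them."""

    def __init__(self, max_items: int = 5) -> None:
        self.max_items = max_items
        self._items: List[str] = []

    def add(self, critique: str) -> None:
        critique = critique.strip()
        if critique and critique not in self._items:  # skip blanks and repeats
            self._items.append(critique)
        self._items = self._items[-self.max_items:]   # keep the most recent ones

    def as_prompt_section(self) -> str:
        if not self._items:
            return "No earlier critiques."
        lines = "\n".join(f"- {item}" for item in self._items)
        return "Issues raised in earlier cycles (do not reintroduce them):\n" + lines


memory = CritiqueMemory()
memory.add("Missing docstring.")
memory.add("No check for negative input.")
memory.add("Missing docstring.")  # duplicate, ignored
print(memory.as_prompt_section())
```

Capping the number of stored critiques is a deliberate choice: it keeps the prompt from growing without bound as the cycles accumulate.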

Practical Applications & Use Cases


The Reflection pattern is valuable in scenarios where output quality, accuracy, or adherence to complex constraints is critical:


1. Creative Writing and Content Generation: Refining generated text, stories, poems, or marketing copy.

- Use Case: An agent writing a blog post.

- Reflection: Generate a draft, critique it for flow, tone, and clarity, then rewrite based on the critique. Repeat until the post meets quality standards.

- Benefit: Produces more polished and effective content.

2. Code Generation and Debugging: Writing code, identifying errors, and fixing them.

- Use Case: An agent writing a Python function.

- Reflection: Write initial code, run tests or static analysis, identify errors or inefficiencies, then modify the code based on the findings.

- Benefit: Generates more robust and functional code.

3. Complex Problem Solving: Evaluating intermediate steps or proposed solutions in multi-step reasoning tasks.

- Use Case: An agent solving a logic puzzle.

- Reflection: Propose a step, evaluate whether it leads closer to the solution or introduces contradictions, and backtrack or choose a different step if needed.

- Benefit: Improves the agent’s ability to navigate complex problem spaces.

4. Summarization and Information Synthesis: Refining summaries for accuracy, completeness, and conciseness.

- Use Case: An agent summarizing a long document.

- Reflection: Generate an initial summary, compare it against key points in the original document, then refine the summary to include missing information or improve accuracy.

- Benefit: Creates more accurate and comprehensive summaries.

5. Planning and Strategy: Evaluating a proposed plan and identifying potential flaws or improvements.

- Use Case: An agent planning a series of actions to achieve a goal.

- Reflection: Generate a plan, simulate its execution or evaluate its feasibility against constraints, then revise the plan based on the evaluation.

- Benefit: Develops more effective and realistic plans.

6. Conversational Agents: Reviewing previous turns in a conversation to maintain context, correct misunderstandings, or improve response quality.

- Use Case: A customer support chatbot.

- Reflection: After a user response, review the conversation history and the last generated message to ensure coherence and address the user’s latest input accurately.

- Benefit: Leads to more natural and effective conversations.

Reflection adds a layer of meta-cognition to agentic systems, enabling them to learn from their own outputs and processes, leading to more intelligent, reliable, and high-quality results.

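For the code generation use case, the critique step does not have to be another LLM call: running tests can serve as the evaluator. The sketch below is one possible shape for such a rule-based critic; `critique_function`, `CASES`, and the two candidate functions are hypothetical and exist only to illustrate the idea.

```python
from typing import Any, Callable, List, Tuple

# (argument, expected result or expected exception type)
Case = Tuple[Any, Any]


def critique_function(func: Callable[[Any], Any], cases: List[Case]) -> List[str]:
    """Return one finding per failing case; an empty list means no findings."""
    findings = []
    for arg, expected in cases:
        expects_error = isinstance(expected, type) and issubclass(expected, Exception)
        try:
            actual = func(arg)
        except Exception as exc:
            if not (expects_error and isinstance(exc, expected)):
                findings.append(f"f({arg!r}) raised {type(exc).__name__}")
            continue
        if expects_error:
            findings.append(f"f({arg!r}) should raise {expected.__name__}")
        elif actual != expected:
            findings.append(f"f({arg!r}) returned {actual!r}, expected {expected!r}")
    return findings


CASES = [(0, 1), (5, 120), (-1, ValueError)]


def first_draft(n):
    """Candidate factorial that forgets to reject negative input."""
    result = 1
    for k in range(2, n + 1):
        result *= k
    return result


def revised(n):
    """Candidate produced after acting on the critic's finding."""
    if n < 0:
        raise ValueError("n must be non-negative")
    return first_draft(n)


print(critique_function(first_draft, CASES))
print(critique_function(revised, CASES))
```

The findings list can be handed back to the producer as the critique text, and an empty list is a natural stopping condition.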

Hands-On Code Example (LangChain)


The implementation of a complete, iterative reflection process necessitates mechanisms for state management and cyclical execution. While these are handled natively in graph-based frameworks like LangGraph or through custom procedural code, the fundamental principle of a single reflection cycle can be demonstrated effectively using the compositional syntax of LCEL (LangChain Expression Language).


This example implements a reflection loop using the LangChain library and OpenAI’s GPT-4o model to iteratively generate and refine a Python function that calculates the factorial of a number. For simplicity, it drives the loop with ordinary procedural Python and direct llm.invoke calls rather than an LCEL chain. The process starts with a task prompt, generates initial code, and then repeatedly reflects on the code based on critiques from a simulated senior software engineer role, refining the code in each iteration until the critique stage judges the code satisfactory or a maximum number of iterations is reached. Finally, it prints the resulting refined code.

First, ensure you have the necessary libraries installed:


```bash
pip install langchain langchain-community langchain-openai python-dotenv
```

You will also need to set up your environment with your API key for the language model you choose (e.g., OpenAI, Google Gemini, Anthropic).


```python
import os

from dotenv import load_dotenv
from langchain_core.messages import HumanMessage, SystemMessage
from langchain_openai import ChatOpenAI

# --- Configuration ---
# Load environment variables from .env file (for OPENAI_API_KEY)
load_dotenv()

# Check if the API key is set
if not os.getenv("OPENAI_API_KEY"):
    raise ValueError("OPENAI_API_KEY not found in .env file. Please add it.")

# Initialize the Chat LLM. We use gpt-4o for better reasoning.
# A lower temperature is used for more deterministic outputs.
llm = ChatOpenAI(model="gpt-4o", temperature=0.1)


def run_reflection_loop():
    """
    Demonstrates a multi-step AI reflection loop to progressively improve a Python function.
    """
    # --- The Core Task ---
    task_prompt = """
    Your task is to create a Python function named `calculate_factorial`.
    This function should do the following:
    1. Accept a single integer `n` as input.
    2. Calculate its factorial (n!).
    3. Include a clear docstring explaining what the function does.
    4. Handle edge cases: The factorial of 0 is 1.
    5. Handle invalid input: Raise a ValueError if the input is a negative number.
    """

    # --- The Reflection Loop ---
    max_iterations = 3
    current_code = ""
    # We will build a conversation history to provide context in each step.
    message_history = [HumanMessage(content=task_prompt)]

    for i in range(max_iterations):
        print("\n" + "="*25 + f" REFLECTION LOOP: ITERATION {i + 1} " + "="*25)

        # --- 1. GENERATE / REFINE STAGE ---
        # In the first iteration, it generates. In subsequent iterations, it refines.
        if i == 0:
            print("\n>>> STAGE 1: GENERATING initial code...")
            # The first message is just the task prompt.
            response = llm.invoke(message_history)
            current_code = response.content
        else:
            print("\n>>> STAGE 1: REFINING code based on previous critique...")
            # The message history now contains the task,
            # the last code, and the last critique.
            # We instruct the model to apply the critiques.
            message_history.append(HumanMessage(content="Please refine the code using the critiques provided."))
            response = llm.invoke(message_history)
            current_code = response.content

        print("\n--- Generated Code (v" + str(i + 1) + ") ---\n" + current_code)
        message_history.append(response)  # Add the generated code to history

        # --- 2. REFLECT STAGE ---
        print("\n>>> STAGE 2: REFLECTING on the generated code...")

        # Create a specific prompt for the reflector agent.
        # This asks the model to act as a senior code reviewer.
        reflector_prompt = [
            SystemMessage(content="""
                You are a senior software engineer and an expert
                in Python.
                Your role is to perform a meticulous code review.
                Critically evaluate the provided Python code based
                on the original task requirements.
                Look for bugs, style issues, missing edge cases,
                and areas for improvement.
                If the code is perfect and meets all requirements,
                respond with the single phrase 'CODE_IS_PERFECT'.
                Otherwise, provide a bulleted list of your critiques.
            """),
            HumanMessage(content=f"Original Task:\n{task_prompt}\n\nCode to Review:\n{current_code}")
        ]

        critique_response = llm.invoke(reflector_prompt)
        critique = critique_response.content

        # --- 3. STOPPING CONDITION ---
        if "CODE_IS_PERFECT" in critique:
            print("\n--- Critique ---\nNo further critiques found. The code is satisfactory.")
            break

        print("\n--- Critique ---\n" + critique)
        # Add the critique to the history for the next refinement loop.
        message_history.append(HumanMessage(content=f"Critique of the previous code:\n{critique}"))

    print("\n" + "="*30 + " FINAL RESULT " + "="*30)
    print("\nFinal refined code after the reflection process:\n")
    print(current_code)


if __name__ == "__main__":
    run_reflection_loop()
```

The code begins by setting up the environment, loading the API key, and initializing GPT-4o with a low temperature for more deterministic output. The core task is defined by a prompt asking for a Python function that calculates the factorial of a number, with specific requirements for a docstring, the edge case of 0, and error handling for negative input. The run_reflection_loop function orchestrates the iterative refinement process. In the first iteration, the language model generates initial code from the task prompt; in subsequent iterations, it refines the code based on the critique from the previous step.

A separate “reflector” role, played by the same language model but with a different system prompt, acts as a senior software engineer and critiques the generated code against the original task requirements. The critique is either a bulleted list of issues or the phrase ‘CODE_IS_PERFECT’ if no issues are found. The loop continues until the critique contains that phrase or the maximum number of iterations is reached. The conversation history (task, generated code, and critiques) is maintained and passed to the model in each generation or refinement step, whereas the reflector receives only the original task and the current code, which keeps its review independent of earlier rounds. Finally, the script prints the last generated code version after the loop concludes.
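The stopping condition in the script is a substring test, so a critique such as "this is not yet CODE_IS_PERFECT" would also end the loop. A stricter check is sketched below as one possible alternative; the helper name `is_approved` is hypothetical and is not part of the original example.

```python
APPROVAL_TOKEN = "CODE_IS_PERFECT"


def is_approved(critique: str, token: str = APPROVAL_TOKEN) -> bool:
    """Return True only when the critique is the approval token and nothing else.

    Surrounding whitespace, quotes, backticks, and a trailing period are
    tolerated, since models often decorate a single-phrase reply.
    """
    cleaned = critique.strip().strip("'\"`.").strip()
    return cleaned == token


print(is_approved("CODE_IS_PERFECT"))
print(is_approved("- The function is not CODE_IS_PERFECT yet: add a type check."))
```

The trade-off is that a stricter check may trigger an extra iteration when the model approves the code but adds commentary, so the choice depends on whether a false stop or an extra LLM call is the more costly mistake.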

Hands-On Code Example (ADK)


Let’s now look at a conceptual code example implemented using the Google ADK. The code employs a Generator-Critic structure, where one component (the Generator) produces an initial result or plan, and another component (the Critic) provides critical feedback, guiding the Generator towards a more refined or accurate final output.

```python
from google.adk.agents import SequentialAgent, LlmAgent

# The first agent generates the initial draft.
generator = LlmAgent(
    name="DraftWriter",
    description="Generates initial draft content on a given subject.",
    instruction="Write a short, informative paragraph about the user's subject.",
    output_key="draft_text"  # The output is saved to this state key.
)

# The second agent critiques the draft from the first agent.
reviewer = LlmAgent(
    name="FactChecker",
    description="Reviews a given text for factual accuracy and provides a structured critique.",
    instruction="""
    You are a meticulous fact-checker.
    1. Read the text provided in the state key 'draft_text'.
    2. Carefully verify the factual accuracy of all claims.
    3. Your final output must be a dictionary containing two keys:
       - "status": A string, either "ACCURATE" or "INACCURATE".
       - "reasoning": A string providing a clear explanation for your status, citing specific issues if any are found.
    """,
    output_key="review_output"  # The structured dictionary is saved here.
)

# The SequentialAgent ensures the generator runs before the reviewer.
review_pipeline = SequentialAgent(
    name="WriteAndReview_Pipeline",
    sub_agents=[generator, reviewer]
)

# Execution Flow:
# 1. generator runs -> saves its paragraph to state['draft_text'].
# 2. reviewer runs -> reads state['draft_text'] and saves its dictionary output to state['review_output'].
```

This code demonstrates a sequential agent pipeline in Google ADK for generating and reviewing text. It defines two LlmAgent instances: generator and reviewer. The generator agent creates an initial draft paragraph on a given subject. It is instructed to write a short, informative piece and saves its output to the state key draft_text. The reviewer agent acts as a fact-checker for that text. It is instructed to read the text from draft_text and verify its factual accuracy, and to format its output as a dictionary with two keys: status, which is either “ACCURATE” or “INACCURATE”, and reasoning, which explains the status. This output is saved to the state key review_output.

A SequentialAgent named review_pipeline manages the execution order, ensuring that the generator runs first, followed by the reviewer. The pipeline as written performs a single generate-and-review pass: the critique is stored in state but is not fed back to the generator, so acting on it requires an additional refinement step or a loop.

Note: An alternative implementation utilizing ADK’s LoopAgent is also available for those interested.
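Before a later step acts on the reviewer's verdict, the stored review has to be read and validated. The sketch below assumes the value under review_output arrives as a JSON string, which is an assumption about how the reviewer formats its reply rather than documented ADK behavior; `parse_review` and `needs_revision` are hypothetical helpers and use only the standard library.

```python
import json
from typing import Dict

VALID_STATUSES = {"ACCURATE", "INACCURATE"}


def parse_review(raw: str) -> Dict[str, str]:
    """Validate a reviewer reply that is expected to be a JSON object."""
    try:
        data = json.loads(raw)
    except (json.JSONDecodeError, TypeError):
        data = None
    status = data.get("status") if isinstance(data, dict) else None
    if not isinstance(status, str) or status not in VALID_STATUSES:
        # Fail closed: an unreadable review is treated as a failed review.
        return {"status": "INACCURATE", "reasoning": "Reviewer reply could not be parsed."}
    return {"status": status, "reasoning": str(data.get("reasoning", ""))}


def needs_revision(review: Dict[str, str]) -> bool:
    """Decide whether the draft should go back to the generator."""
    return review["status"] != "ACCURATE"


ok = parse_review('{"status": "ACCURATE", "reasoning": "All claims check out."}')
bad = parse_review("The text looks fine to me.")
print(needs_revision(ok), needs_revision(bad))
```

Treating an unparseable review as a failure is a conservative design choice: it costs an extra revision round but avoids accepting a draft on the strength of a reply that could not be read.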

Before concluding, it’s important to consider that while the Reflection pattern can significantly enhance output quality, it comes with important trade-offs. The iterative process, though powerful, can lead to higher costs and latency, since every refinement loop requires at least one additional LLM call (and usually two: one to critique and one to refine), making it suboptimal for time-sensitive applications. Furthermore, the pattern consumes context: with each iteration the conversational history expands to include the initial output, the critique, and subsequent refinements, which increases the risk of exceeding the model’s context window.
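These trade-offs can be estimated with simple arithmetic before committing to a loop. The sketch below assumes the loop shape of the LangChain example (one generate or refine call plus one critique call per iteration, with the full history replayed to the producer); the function names and the token counts are hypothetical illustration values, not measurements.

```python
from typing import List


def worst_case_calls(max_iterations: int) -> int:
    """Each iteration makes one generate/refine call and one critique call."""
    return 2 * max_iterations


def producer_prompt_sizes(
    task_tokens: int, code_tokens: int, critique_tokens: int, iterations: int
) -> List[int]:
    """Approximate tokens sent to the producer at each iteration.

    Assumes the whole history is replayed and ignores the short
    "please refine" instruction added in later rounds.
    """
    sizes = []
    history = task_tokens
    for _ in range(iterations):
        sizes.append(history)
        history += code_tokens + critique_tokens  # history grows every round
    return sizes


calls = worst_case_calls(3)
sizes = producer_prompt_sizes(task_tokens=200, code_tokens=300, critique_tokens=150, iterations=3)
print(calls, sizes, sum(sizes))
```

With these illustrative numbers, three iterations mean up to six LLM calls, and the producer's prompt grows from 200 to 1,100 tokens, so the total input cost rises faster than the iteration count.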

At a Glance


What: An agent’s initial output is often suboptimal, suffering from inaccuracies, incompleteness, or a failure to meet complex requirements. Basic agentic workflows lack a built-in process for the agent to recognize and fix its own errors. This is solved by having the agent evaluate its own work or, more robustly, by introducing a separate logical agent to act as a critic, preventing the initial response from being the final one regardless of quality.


Why: The Reflection pattern offers a solution by introducing a mechanism for self-correction and refinement. It establishes a feedback loop where a “producer” agent generates an output, and then a “critic” agent (or the producer itself) evaluates it against predefined criteria. This critique is then used to generate an improved version. This iterative process of generation, evaluation, and refinement progressively enhances the quality of the final result, leading to more accurate, coherent, and reliable outcomes.


Rule of thumb: Use the Reflection pattern when the quality, accuracy, and detail of the final output are more important than speed and cost. It is particularly effective for tasks like generating polished long-form content, writing and debugging code, and creating detailed plans. Employ a separate critic agent when tasks require high objectivity or specialized evaluation that a generalist producer agent might miss.


Visual summary


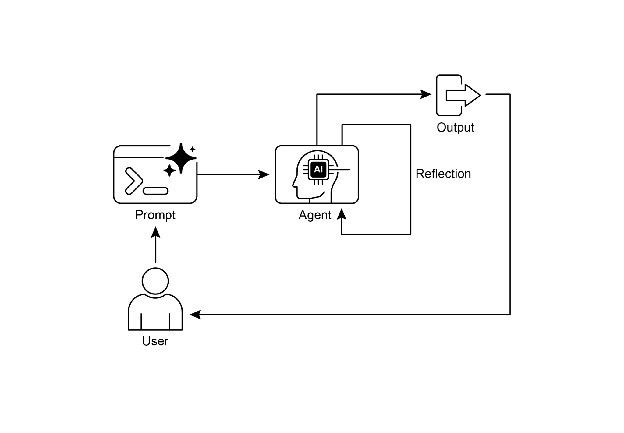

Fig. 1: Reflection design pattern, self-reflection

Fig. 2: Reflection design pattern, producer and critic agent

Key Takeaways


- The primary advantage of the Reflection pattern is its ability to iteratively self-correct and refine outputs, leading to significantly higher quality, accuracy, and adherence to complex instructions.

- It involves a feedback loop of execution, evaluation/critique, and refinement. Reflection is essential for tasks requiring high-quality, accurate, or nuanced outputs.

- A powerful implementation is the Producer-Critic model, where a separate agent (or prompted role) evaluates the initial output. This separation of concerns enhances objectivity and allows for more specialized, structured feedback.

- However, these benefits come at the cost of increased latency and computational expense, along with a higher risk of exceeding the model’s context window or being throttled by API services.

- While full iterative reflection often requires stateful workflows (like LangGraph), a single reflection step can be implemented in LangChain using LCEL to pass output for critique and subsequent refinement.

- Google ADK can facilitate reflection through sequential workflows where one agent’s output is critiqued by another agent, allowing for subsequent refinement steps.

- This pattern enables agents to perform self-correction and enhance their performance over time.

Conclusion


The reflection pattern provides a crucial mechanism for self-correction within an agent’s workflow, enabling iterative improvement beyond a single-pass execution. This is achieved by creating a loop where the system generates an output, evaluates it against specific criteria, and then uses that evaluation to produce a refined result. This evaluation can be performed by the agent itself (self-reflection) or, often more effectively, by a distinct critic agent, which represents a key architectural choice within the pattern.


While a fully autonomous, multi-step reflection process requires a robust architecture for state management, its core principle is effectively demonstrated in a single generate-critique-refine cycle. As a control structure, reflection can be integrated with other foundational patterns to construct more robust and functionally complex agentic systems.


References


Here are some resources for further reading on the Reflection pattern and related concepts:


- Training Language Models to Self-Correct via Reinforcement Learning: https://arxiv.org/abs/2409.12917

- LangChain Expression Language (LCEL) Documentation: https://python.langchain.com/docs/introduction/

- LangGraph Documentation: https://www.langchain.com/langgraph

- Google Agent Development Kit (ADK) Documentation (Multi-Agent Systems): https://google.github.io/adk-docs/agents/multi-agents/